Projects

Selected Paper Showcases

My research spans two closely related directions: AI Security and AI for Security.

One track asks how we secure AI systems themselves. The other asks how modern AI can be used to protect people, platforms, and online ecosystems. A third stream focuses on understanding limits, measuring behavior, and evaluating risks across adjacent security settings.

Track 01

AI Security

Defending models, measuring robustness, guiding secure code generation, and designing stronger safety mechanisms for AI systems.

Track 02

AI for Security

Using multimodal and language models to detect abuse, moderate harmful content, and improve online safety operations.

Track 03

Related Studies

Studies that probe model behavior and examine how users perceive risk in security-relevant settings.

Track 01

AI Security

Research on defending AI systems, measuring robustness, guiding secure code generation, and rethinking safety policy where AI and platform governance increasingly intersect.

JBShield

USENIX Security 2025

JBShield defends aligned large language models against jailbreak attacks by inspecting what happens inside the model rather than relying only on surface-level prompt filters. The framework identifies toxic and jailbreak-related concepts in hidden activations, then intervenes on those concepts to preserve the model's refusal behavior under adversarial prompting.

This makes the defense more mechanistic than keyword blocking or prompt-only safeguards. The paper combines representation-level analysis with mitigation and shows that concept-aware intervention can substantially reduce successful jailbreak attacks across diverse LLMs.

SCPGraph

On Submission

SCPGraph addresses a different security challenge: LLMs that generate functional but insecure code. The project encodes secure coding practices as graph structures and uses those graphs to guide LLM reasoning during code generation, grounding the model in concrete security constraints instead of vague instructions to "write safe code."

By operationalizing relationships among secure design rules, the framework helps the model avoid common implementation mistakes and improve secure coding performance on realistic tasks. It offers a practical way to turn security knowledge into structured guidance for AI-assisted software development.

MultimodelRobustness

SKM 2023

This paper studies how vision-language multimodal models behave under robustness stress, focusing on how brittle cross-modal systems can become when their inputs or assumptions shift. It examines the reliability limits of multimodal models before they are relied on in downstream security workflows.

The project is foundational because it surfaces where multimodal systems fail before they are deployed in safety-critical or security-sensitive settings. It also provides an early bridge to later work on multimodal moderation and trustworthy AI behavior.

Track 02

AI for Security

Projects that deploy multimodal LLMs and vision-language models to moderate harmful content, unsafe game ecosystems, and rapidly evolving online abuse.

HVGuard

EMNLP 2025

HVGuard studies hateful video moderation, where harm is often conveyed jointly through speech, visuals, sarcasm, and pacing instead of a single explicit cue. The system combines transcripts and video frames in a multimodal LLM pipeline so the model can reason over cross-modal evidence rather than treating a clip as isolated text or isolated imagery.

This makes the approach more effective for implicit and context-dependent hate in real short-video settings. The project shows how structured multimodal reasoning can improve practical moderation for video platforms where harmful intent is often concealed behind humor, editing style, or audiovisual mismatch.

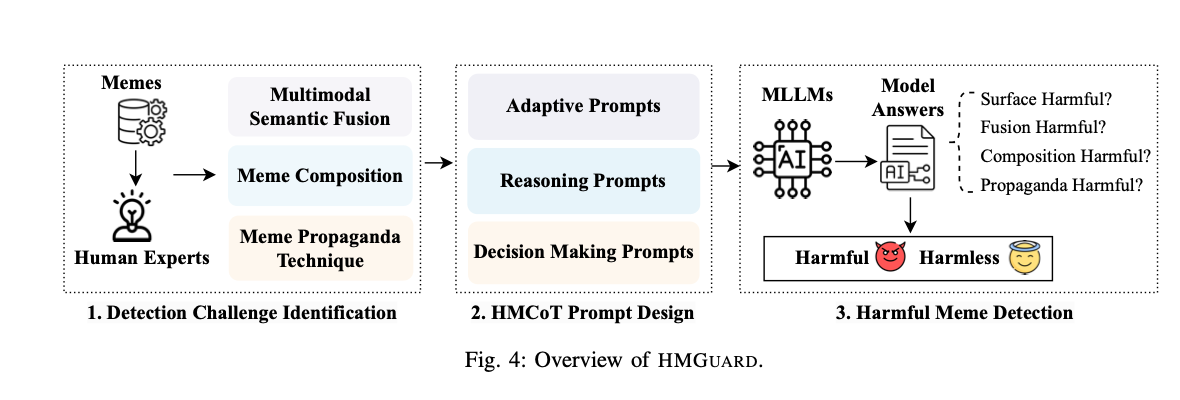

HMGuard

NDSS 2025

HMGuard focuses on harmful memes, a moderation problem where a small amount of text and imagery can hide hateful, harassing, or propagandistic intent. The framework uses multimodal large language models to reason about the relationship between the image, the overlaid text, and the broader social meaning conveyed by the meme.

The work treats meme moderation as an understanding problem rather than a pure classification task. That perspective is important for real-world moderation because harmful meaning often depends on cultural references, visual composition, and subtle multimodal cues that standard detectors miss.

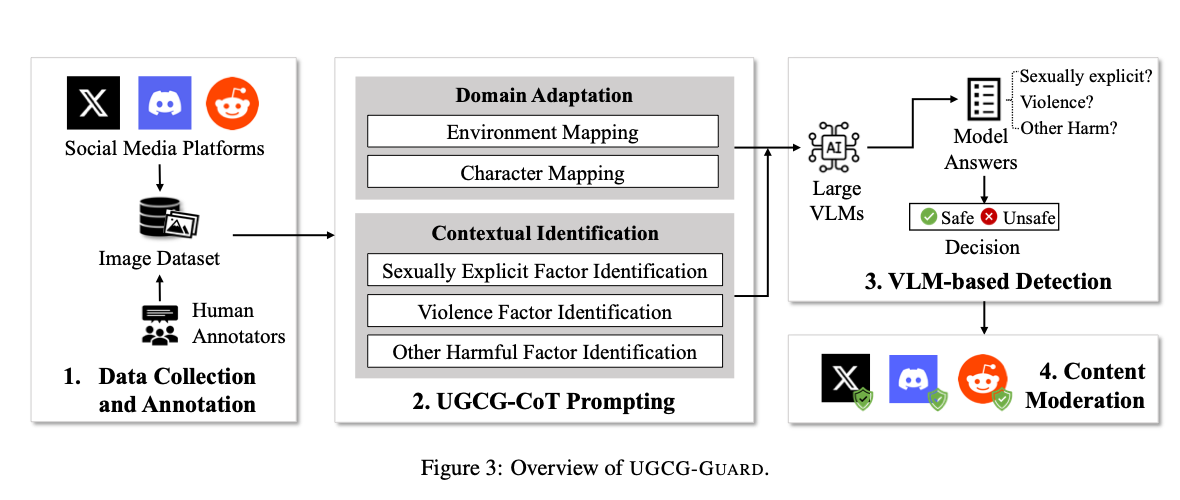

UGCG-Guard

USENIX Security 2024

UGCG-Guard targets promotional content used to lure users into unsafe user-generated content games. The system screens social posts, screenshots, and game-related imagery with large vision-language models to detect sexualized, exploitative, or otherwise illicit promotion before it spreads across the platform ecosystem.

The project is designed for the messy moderation setting around creator-driven games, where harmful content mixes platform-native slang, visual signals, and rapidly shifting promotion styles. It shows how LVLM-based moderation can protect vulnerable users, especially minors, in large UGC game communities.

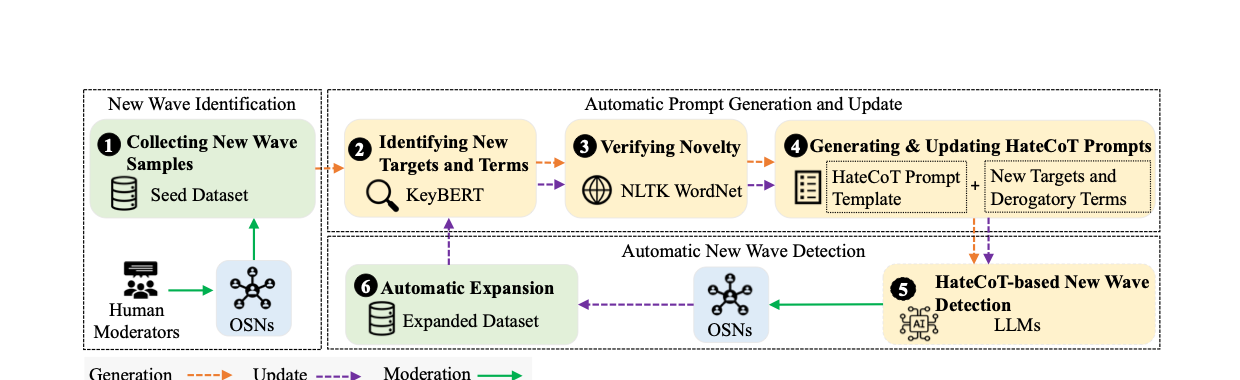

NewWave

IEEE S&P 2024

NewWave studies hate speech that surges around breaking events, where static moderation policies and older classifiers quickly go stale. The paper introduces an LLM-based reasoning framework that captures the narratives, slogans, and contextual references tied to newly emerging hate waves triggered by real-world events.

Instead of assuming that the target classes are fixed, the project treats moderation as a continual adaptation problem. The result is a more responsive way to track event-driven abuse and update detection strategies without retraining a new model from scratch for every shift in online discourse.

Track 03

Related Studies

Studies that analyze model behavior and examine human risk perception without fitting neatly into either of the two primary application tracks.

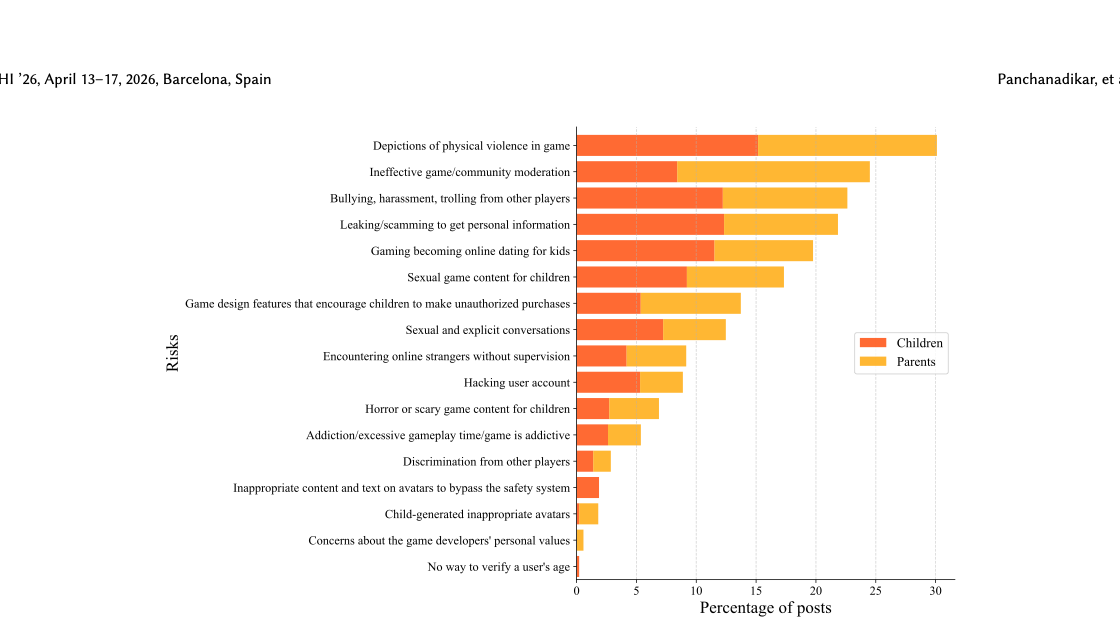

Beyond Age-Based Restrictions

CHI 2026

This project examines children's online safety in user-generated content games by comparing how parents and children perceive risk. Rather than assuming age gates alone are enough, the study highlights the gap between adult oversight models and the types of content, interaction, and social exposure children actually encounter inside creator-driven game ecosystems.

The paper argues for safety interventions that are more context-aware and experience-driven than blanket age-based restrictions. It connects platform design, moderation policy, and lived user behavior in a way that is directly relevant to safer online game environments.

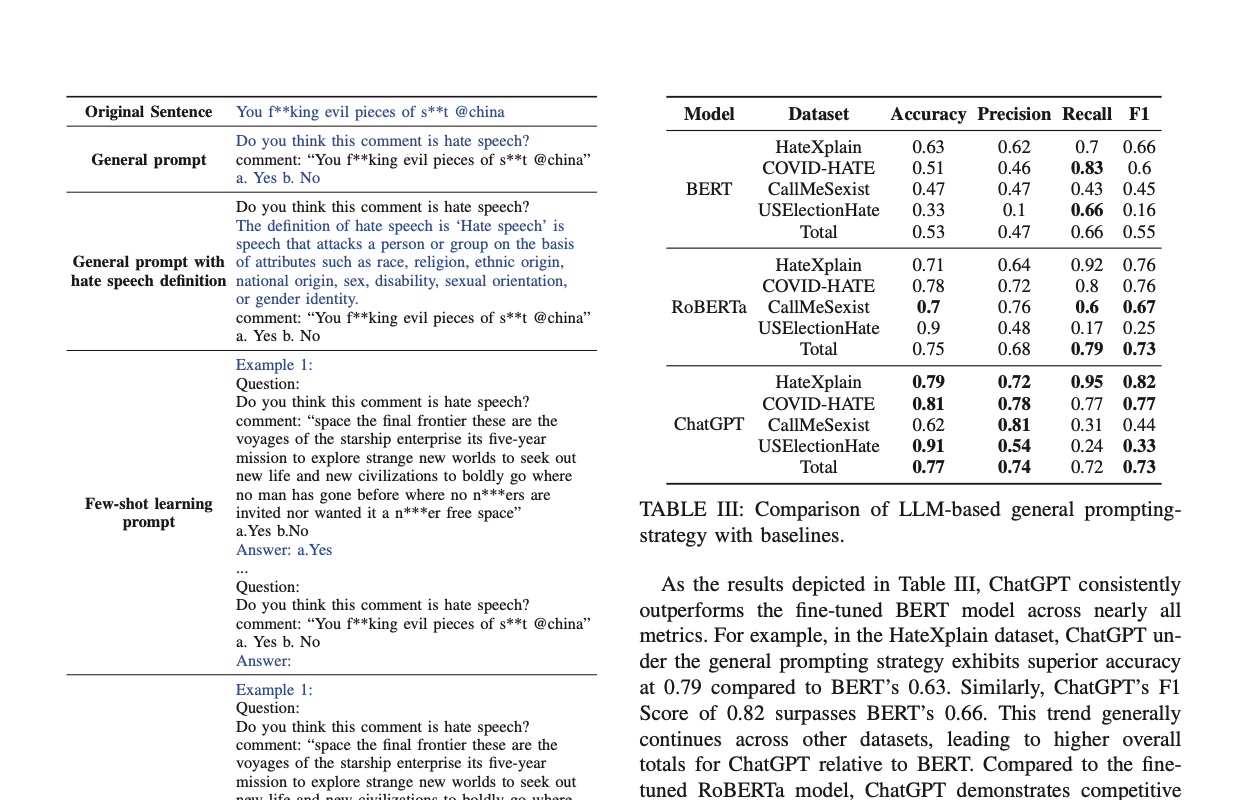

LLM4HateSpeech

ICMLA 2023

LLM4HateSpeech examines whether large language models can reliably detect hate speech in realistic, context-heavy settings. The work compares prompt strategies and studies how contextual clues, task framing, and external knowledge affect LLM judgments on subtle or ambiguous hateful language.

Its contribution is diagnostic as much as empirical: the paper identifies where LLMs help, where they remain brittle, and which prompting strategies make them more useful for moderation. That makes it a strong foundation for later projects on LLM-based online safety systems.